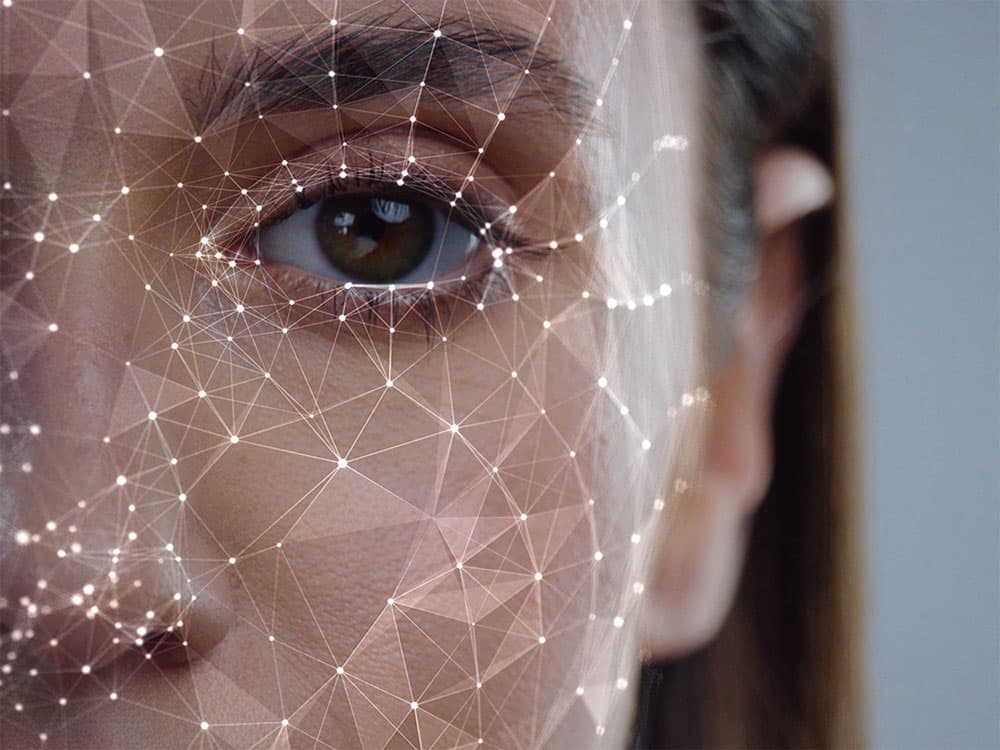

Facial recognition technology identifies an individual from an image, or other audio-visual record, of their face. This can serve as a means of granting access to a particular system or service. Facial recognition has improved impressively in recent years. According to NIST, the National Institute of Standards and Technology, in 2020, a facial recognition algorithm was incorrect only 0.08% of the time.

These systems fall into the field of AI, as they are powered by deep learning. Deep learning relies on neural networks trained on immense numbers of examples of the problems the system may face, which lets the model learn to identify patterns. Facial recognition systems pinpoint characteristics of a face detected in the image or video, e.g., the texture of the person’s skin.

Selfie ID verification – useful, but not 100% accurate

Selfie ID verification involves uploading a selfie, a snapshot of one’s own face, alongside a government-recognized photo ID (like a driver’s license) so that the two can be compared. Today, we can simply hold our phones up to our faces and unlock them using facial verification. Strictly speaking, this is no longer selfie verification: no image needs to be captured, because the camera conducts a live scan.
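To make the comparison step more concrete, here is a minimal sketch of how a selfie might be matched against an ID photo. It assumes each face has already been converted into a list of numbers (an "embedding") by some recognition model. The vectors, the `faces_match` helper, and the 0.8 threshold are all hypothetical, chosen only for illustration; real systems use much longer vectors and carefully tuned thresholds.

```python
import math

def cosine_similarity(a, b):
    """Return how closely two embedding vectors point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero vectors")
    return dot / (norm_a * norm_b)

def faces_match(selfie_embedding, id_embedding, threshold=0.8):
    """Hypothetical decision rule: accept when similarity reaches the threshold."""
    return cosine_similarity(selfie_embedding, id_embedding) >= threshold

# Made-up embeddings for illustration only.
selfie = [0.12, 0.85, 0.31, 0.40]
id_photo = [0.10, 0.80, 0.35, 0.42]   # same person, slightly different photo
stranger = [0.90, 0.05, 0.60, 0.02]   # a different person

print(faces_match(selfie, id_photo))  # similar vectors, accepted
print(faces_match(selfie, stranger))  # dissimilar vectors, rejected
```

Where the threshold sits is a trade-off: raising it rejects more impostors but also turns away more genuine users, which is one reason no setting is right 100% of the time.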

Although research has shown high degrees of accuracy in face verification, tests tend to be conducted in ideal conditions: perfect lighting and positioning. Real-world conditions are rarely as ideal, so accuracy rates drop. Additionally, if the reference image of an individual’s face is several years old, AI systems can struggle to recognize the person.

Real-world biases affect AI accuracy

An article in the New York Times reported that the software is most accurate for Caucasian men, with correct verifications nearly 99% of the time, whereas error rates for darker-skinned women reached nearly 35%. This research from M.I.T. shows how racial biases from our social environments have infiltrated even artificial intelligence: the data used to build these systems evidently included far more Caucasian men than people of color, such as Black women.
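Disparities like these only become visible when results are broken down by demographic group rather than reported as a single overall figure. The sketch below shows one simple way to do that. The group labels and outcomes are invented for illustration and merely mirror the proportions cited above; they are not data from the study.

```python
from collections import defaultdict

def error_rate_by_group(results):
    """Compute the share of incorrect verifications for each group.

    `results` is a list of (group_label, was_correct) pairs.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, was_correct in results:
        totals[group] += 1
        if not was_correct:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}

# Invented outcomes: 100 attempts per hypothetical group.
results = (
    [("group_a", True)] * 99 + [("group_a", False)] * 1
    + [("group_b", True)] * 65 + [("group_b", False)] * 35
)

rates = error_rate_by_group(results)
for group, rate in rates.items():
    print(f"{group}: {rate:.0%} errors")
```

Averaged together, these invented results would give an error rate of 18%, a number that hides how differently the two groups are treated.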

If we are racing ahead to use AI-operated facial recognition in various areas of our lives, should it not be possible for anyone to use these systems securely and accurately, regardless of race and gender? Hopefully, the empirical evidence revealing racial bias will be enough for future development to address the issue. Even now, the leading systems in facial recognition have disparities in their success rates when it comes to different genders and skin tones.

Since facial recognition is unlikely to become 100% accurate, measures against misidentification and abuse are necessary. If individuals can access other people’s personal information, including images of their faces, they can commit identity fraud. Where accounts can be opened entirely remotely, a thief could even open a bank account in a victim’s name. Policymakers continue to work to regulate these systems so that businesses and governments can benefit from them securely. Ultimately, facial recognition is a biometric tool that can make online engagement easier, but, as is often the case, verification should not rely on a single check.